Been too sick to do anything but play Stardew Valley lately, but hey, I’ve never gotten past day 20 before, and now I’m having a lovely time :)

A little while back, a lovely person reached out and asked for some advice. After some interesting discussion, they asked if I had any good resources that helped me improve as an engineer. I feel like my reply might be more helpful generally; here it is.

I’ve been thinking on your question for a while; it’s hard to pick out single things, but here’s a start after going through my bookmark archive:

- https://www.infoq.com/presentations/Simple-Made-Easy/ — I very rarely watch talks, I have bad hearing/audio processing and find them really inefficient besides. (Same goes for podcasts; I’d rather read something and be able to scan through it or search it; I’m not spending an hour listening to you and some other guy with impossible accents mumble interrupted only by ads for Audible.) But this, this one I have watched, quite a few times, and recommended it a lot back when it was new (2011). You don’t need to know or care about Clojure to get the most out of this.

- https://prog21.dadgum.com/ — this guy knows what’s good. Consider starting with any interesting looking titles from the lists on the homepage, and then scan the archive.

- https://blog.regehr.org/archives/942 — random good post. At the end he says: “Finally, we probably need to do more code reading in class.” I would emphasise this. Read much more. Get very, very comfortable diving into new codebases to add tiny features, fix bugs, or to diagnose/understand why something happened the way it did. (Nix is an actual super-power when it comes to just diving into new environments, and is very worth the effort to grok, for so many reasons, despite its steep learning curve. Here is a post on Just Diving In to something, when I decided to replace the Markdown library at the core of a Fediverse/ActivityPub implementation with my own. Here is another one where we add our very own bespoke feature to git, in a way where we get to keep it forever, even with upgrades, new machines, everything.)

- https://ferd.ca/lessons-learned-while-working-on-large-scale-server-software.html — another random good post, and Ferd writes a lot of other good stuff too; scan the archive.

- https://www.youtube.com/watch?v=F785myaMdR8 — another talk, this one from last year instead of last decade. Dude has a big personality, and some of the time you might wonder why he loves the word “modality” so damn much, but he gets at something important — something increasingly lost as folks continue to drink the “we’ll all be prompt engineers in a few years” Koolaid while trying very hard to ignore the material reality of “but what’s going to be funding this” and the more abstract reality of “perhaps abdicating thousands of tiny decisions to an RNG is not, strictly speaking, the best way to improve our ability to construct systems we understand and can maintain”.

- https://ratfactor.com/papers/naur1 — further to that last point, this is a (commentary on a) classic text by Peter Naur, who diverged from the (then more dominant) idea that programming was a kind of mathematics. The text is linked at that first dot-point. I’d suggest reading the commentary/summary first, and then the original if your interest is sufficiently piqued.

Outside learning resources like this, I’d suggest:

- Get really comfortable with using your VCS. Learn to do black magic with git, and learn to do it fast. This will probably take a lot of aliases; scroll down to the “Git aliases” section on this page to see what I mean. You’ll write bad-quality commit messages like “update project files” if you have to type out “git add blah” and “git commit” and “git push” every time you commit, and you’ll make larger, less useful, less self-contained commits too. If staging just what you want, committing, and pushing it is as simple as “a”, “c”, “w”, you’ll do it more often. If amending a commit is as easy as “cx”, and reordering commits is as easy as “ri HEAD~10”, you’ll do it more. This is a basic observation from linguistics: more commonly used words/signs evolve to become shorter/simpler. Apply this to your own environment: see what you use most (check your shell history! pipe it into sort/uniq and find out what you do most!), create aliases for those, and retrain yourself to use them. (e.g. with git, create an alias for git itself that instead just echoes “bad! use the new aliases!”, and after a dozen or so tries you’ll get it. You can apply this technique in lots of places where your muscle memory would otherwise prevent you from adopting an improved/different workflow; see also unbinding keys in your editor.)

- I’d suggest learning jujutsu, too; per that README, it’s what I use 100% of the time now, as an interface to git repositories! This post contrasts them a bit, and this one in a specific case.

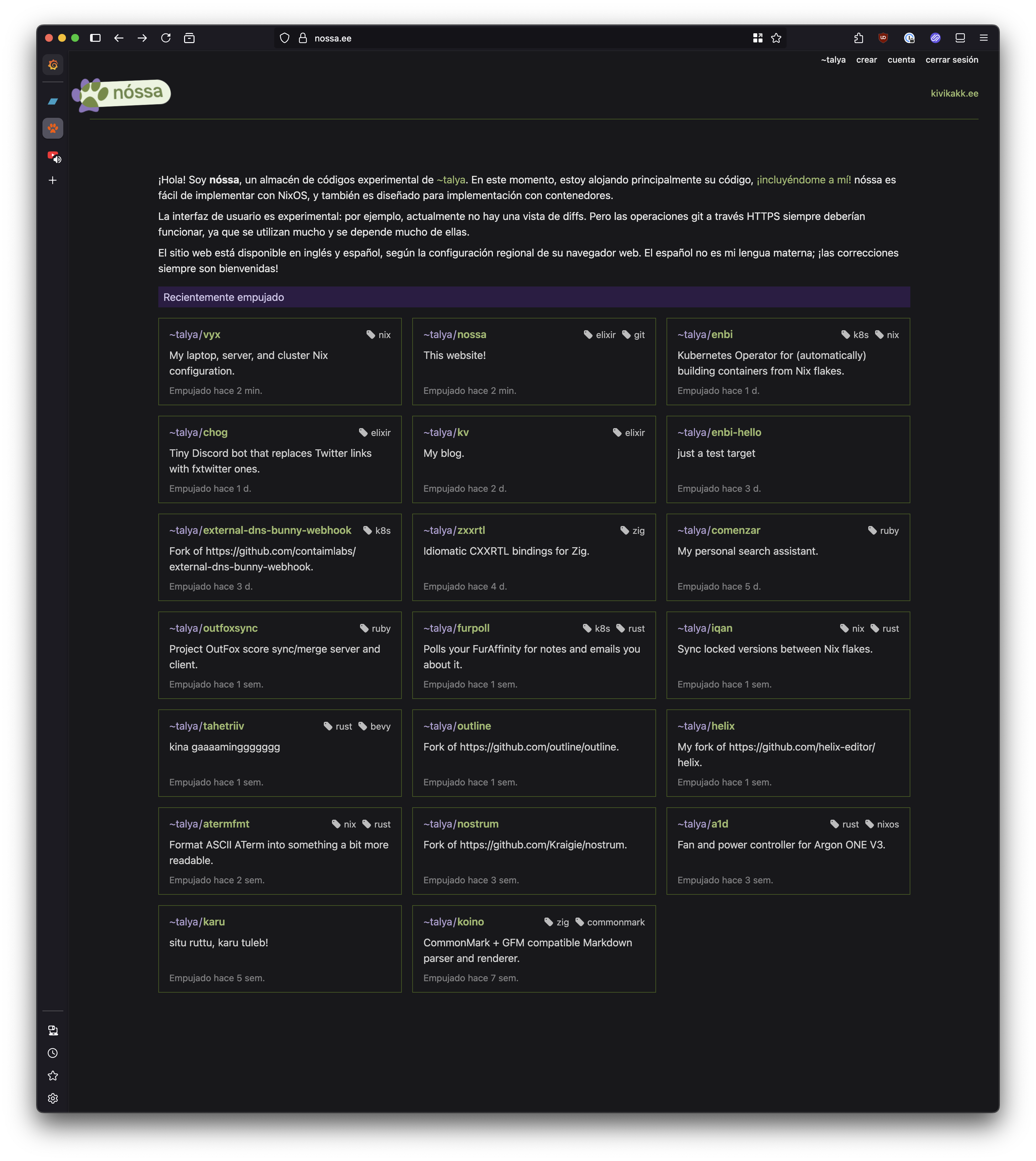

- Per above, Nix is a wonderful way to accumulate learnings and improvements in a way that is version-controlled and reproducible.
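To make the shell-history and retraining ideas from the git point above a bit more concrete, here is a minimal sketch for bash or zsh. The names `top_commands`, `a`, `c` and `w` and what they expand to are illustrative assumptions here, not a copy of the aliases section mentioned above, and it assumes a history file with one command per line.

```shell
# Count the most frequent commands in a history listing read from stdin.
# For git, the subcommand is kept too, so "git status" and "git commit"
# are counted separately. The sed step strips the ": <timestamp>:<n>;"
# prefix that zsh's extended history format adds; plain lines pass through.
# Optional first argument: how many entries to show (default 10).
top_commands() {
  sed -E 's/^: [0-9]+:[0-9]+;//' \
    | awk 'NF { if ($1 == "git" && NF > 1) print $1, $2; else print $1 }' \
    | sort | uniq -c | sort -rn | head -n "${1:-10}"
}

# Hypothetical short wrappers (assumed expansions, adjust to taste).
# They call `command git` so they bypass the nagging function below.
a() { command git add -p "$@"; }
c() { command git commit "$@"; }
w() { command git push "$@"; }

# Retraining aid: typing out `git` by hand now nags instead of running.
git() {
  echo "bad! use the new aliases!" >&2
  return 1
}

# Example usage (not run here):
#   top_commands 20 < ~/.bash_history
```

Shell functions are used instead of `alias` so the wrappers can take arguments and keep working in scripts; once the muscle memory has shifted, `unset -f git` removes the nag.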

I hope these links and ideas prove enlightening, or at least interesting!